Although I work almost 100% with Linux these days, I have a few Windows applications I find useful, including IrfanView for quick image edits and OCR (much simpler, albeit less powerful, than GIMP) and PathSync for synchronizing local directories with my thumb drives. I also find PDF-XChange Viewer handy for adding annotations to PDF files. All these programs run fine under Wine, with one small "gotcha". When I install them (by downloading the Windows installer and then running "wine name-of-installer.exe" in a terminal), shortcuts to them are added to the Wine submenu of the GNOME menu ... but the shortcuts are often dysfunctional. They attempt to launch the Windows .lnk file added to the (virtual) Windows start menu, and typically I either get a "file not found" pop-up or the launch simply fails silently.

The solution I have found is to manually edit the shortcuts. Suppose that I am logged in as user "paul" and I've just installed IrfanView. My first step is to track down the Windows path to the executable. Assuming defaults were used (generally a good thing when installing software under Wine), I open a terminal and drill down to /home/paul/.wine/drive_c/Program\ Files, where I find the IrfanView directory and, in that, the i_view32.exe executable.

The next step is to run

env WINEPREFIX="/home/paul/.wine" wine "C:\Program Files\IrfanView\i_view32.exe"

in a terminal and make sure that it launches correctly. Note that the path to the executable is written Windows-style (backslashes, nothing escaped) in quotation marks. If the program launches properly, I close it, copy the entire line to the clipboard, find the launcher in the GNOME menu, right-click and choose "Edit properties", and replace the contents of the "Command:" field with the contents of the clipboard. I also confirm that the launcher type is "Application" and not "Application in Terminal". With that done, I close the launcher edit panel and test it. Sometimes it seems to take a while before the menu detects and adopts the change I just made. I'm not sure, short of logging out (overkill?), what the best way to impose the change immediately is. I've tried "killall gnome-panel" (possibly also overkill) with mixed success.

Thursday, December 29, 2011

Thursday, December 22, 2011

Extracting Variables in CPLEX

It's never happened to me, but apparently some CPLEX users (working with one of the programming APIs) inherit a fully formed problem, an instance of IloCplex or perhaps IloModel, without having access to the Concert code that constructed it. You only need the problem object to solve it and get the solution status and objective value; but in order to get the values of the variables in an optimal solution, you need to pass either an array of variables (IloNumVar[]) or individual variables in function calls (so that CPLEX can return values matched to the corresponding variables). If all you have is the problem object, how do you know what the variables are?

This has been asked more than once on help forums, so I list below a Java function that I believe will extract all variables from an instance of IloCplex. Something quite similar should work in the C++ API, and hopefully if you use the C API you can make the leap from the Java code. The Python API seems to provide a VariablesInterface class that I think provides a mechanism (if it's even needed in Python -- I don't really know). I'm blissfully ignorant about the Matlab API.

I've tried to stress-test the Java code, but nonetheless you use it at your own peril.

    // Requires the CPLEX Java API (cplex.jar) on the classpath.
    import ilog.concert.*;
    import ilog.cplex.IloCplex;
    import java.util.Arrays;
    import java.util.HashSet;
    import java.util.Iterator;

    private IloNumVar[] parse(IloCplex cplex) throws IloException {
        // A set, so a variable appearing in several constraints is kept once.
        HashSet<IloNumVar> vars = new HashSet<IloNumVar>();
        Iterator it = cplex.iterator();
        IloLinearNumExpr expr;
        IloLinearNumExprIterator it2;
        while (it.hasNext()) {
            IloAddable thing = (IloAddable) it.next();
            if (thing instanceof IloRange) {
                // Constraint: walk its expression (the cast assumes it is linear).
                expr = (IloLinearNumExpr) ((IloRange) thing).getExpr();
                it2 = expr.linearIterator();
                while (it2.hasNext()) {
                    vars.add(it2.nextNumVar());
                }
            } else if (thing instanceof IloObjective) {
                // Objective: same treatment, again assuming a linear expression.
                expr = (IloLinearNumExpr) ((IloObjective) thing).getExpr();
                it2 = expr.linearIterator();
                while (it2.hasNext()) {
                    vars.add(it2.nextNumVar());
                }
            } else if (thing instanceof IloSOS1) {
                vars.addAll(Arrays.asList(((IloSOS1) thing).getNumVars()));
            } else if (thing instanceof IloSOS2) {
                vars.addAll(Arrays.asList(((IloSOS2) thing).getNumVars()));
            } else if (thing instanceof IloLPMatrix) {
                vars.addAll(Arrays.asList(((IloLPMatrix) thing).getNumVars()));
            }
        }
        // A zero-length seed array avoids returning {null} when no variables are found.
        IloNumVar[] varray = vars.toArray(new IloNumVar[0]);
        return varray;
    }

Update: I have updated the code, incorporating some suggestions I received on a CPLEX forum. The parser is now a static method in a class of its own. You can download the source code (one file, plain text) from Google Docs. To use it, you will likely want to change the package name. Also feel free to delete the various print statements, which are there only for demonstration purposes.

Wednesday, December 7, 2011

Indexing an Array with a Variable

Sometimes, in the context of a mathematical program, someone wants to use a variable $x$ to index a vector $y$, as in \[z = y[x].\] As a starting point, we should assume that $x$ is an integer variable whose domain equals, or at least is a subset of, the index set of $y$. If you try to set $z=y[2.71828]$, or $z=y[5]$ when $y$ is indexed by $\{1,\dots,4\}$, you should expect Bad Things To Happen.

With that stipulation, $z=y[x]$ poses no problem in a constraint program. It cannot, however, be expressed directly in a mathematical program. If the domain of $x$ is not too large, it can be implemented somewhat obliquely in a mathematical program using binary variables.

Let's assume that $y$ is indexed over $1,\dots,N$ (with $N$ known at the outset), and that $y$ is a parameter. (We'll treat the case where $y$ is a variable in a minute.) Create $N$ binary variables $x_1,\dots,x_N$ and add the constraint \[x_1+\dots+x_N=1,\] which makes $\{x_1,\dots,x_N\}$ a type 1 special ordered set (SOS1). Then define $z$ via \[z=\sum_{i=1}^N y[i]*x_i.\]

You can do exactly this when $y$ is a vector of variables, but it adds a nonconvex quadratic constraint to the model, which likely prevents you from finding a guaranteed optimum, and greatly restricts what algorithms/software you can use. If we know a priori lower and upper bounds for $y$, say \[L_i \le y[i] \le U_i \quad \forall i\in \{1,\dots,N\}\] with $L_i$ and $U_i$ parameters, we can add the $x_i$ as above and use the following set of constraints to define $z$: \[\begin{aligned} y[i] - z &\le (U_i - \hat{L})(1-x_i) \quad \forall i\in \{1,\dots,N\}\\ y[i] - z &\ge (L_i - \hat{U})(1-x_i) \quad \forall i\in \{1,\dots,N\}\end{aligned}\] where $\hat{L}=\min_{i=1,\dots,N} L_i$ and $\hat{U}=\max_{i=1,\dots,N} U_i$. When $x_i=1$, both right-hand sides are zero, which forces $z=y[i]$; when $x_i=0$, the constraints reduce to $L_i-\hat{U}\le y[i]-z\le U_i-\hat{L}$, which holds automatically for any $y[i]\in[L_i,U_i]$ and $z\in[\hat{L},\hat{U}]$.
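If you want to convince yourself that the two families of constraints really do pin $z$ to the selected entry of $y$, a small numerical check is easy to write. What follows is only a sketch, not part of any solver API: the class name `IndexLinkCheck`, the method `zInterval` and the sample bounds and values are all made up for illustration. For a fixed choice of which $x_k$ equals 1 (indices are 0-based in the code), it computes the tightest lower and upper bounds on $z$ implied by the constraints.

```java
import java.util.Arrays;

/** Numerical check of the big-M link between z and y[i] for a fixed one-hot x. */
final class IndexLinkCheck {

    /**
     * Returns {lower, upper}: the tightest bounds on z implied by the two
     * constraint families when x_k = 1 and every other x_i = 0 (k is 0-based).
     * lo[i] and hi[i] play the roles of L_i and U_i.
     */
    static double[] zInterval(double[] y, double[] lo, double[] hi, int k) {
        double lHat = Arrays.stream(lo).min().getAsDouble(); // smallest lower bound
        double uHat = Arrays.stream(hi).max().getAsDouble(); // largest upper bound
        double lower = Double.NEGATIVE_INFINITY;
        double upper = Double.POSITIVE_INFINITY;
        for (int i = 0; i < y.length; i++) {
            int xi = (i == k) ? 1 : 0;
            // y[i] - z <= (U_i - lHat)(1 - x_i)  rearranges to a lower bound on z
            lower = Math.max(lower, y[i] - (hi[i] - lHat) * (1 - xi));
            // y[i] - z >= (L_i - uHat)(1 - x_i)  rearranges to an upper bound on z
            upper = Math.min(upper, y[i] - (lo[i] - uHat) * (1 - xi));
        }
        return new double[] {lower, upper};
    }

    public static void main(String[] args) {
        // Made-up data: each y[i] lies within [lo[i], hi[i]].
        double[] lo = {0.0, 2.0, -5.0};
        double[] hi = {4.0, 9.0, 1.0};
        double[] y = {3.0, 7.5, -2.0};
        for (int k = 0; k < y.length; k++) {
            double[] iv = zInterval(y, lo, hi, k);
            System.out.printf("x_%d = 1: z in [%.2f, %.2f], y[%d] = %.2f%n",
                    k + 1, iv[0], iv[1], k + 1, y[k]);
        }
    }
}
```

For each choice of $k$ the interval should collapse to the single point $y[k]$: the constraints for $i\neq k$ only impose bounds no tighter than $\hat{L}\le z\le\hat{U}$, while the pair for $i=k$ squeezes $z$ from both sides.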

Saturday, November 26, 2011

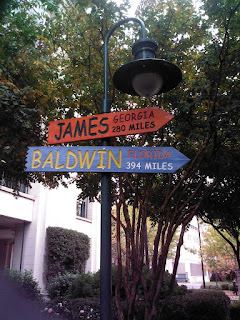

Charlotte's Literary Park

Across the street from the Charlotte (NC) Convention Center, site of the recent INFORMS conference, is a small park/shopping area that I think is known as "The Green". It contains a bit of statuary and some rather amusing "street signs" that I thought were worth remembering. In no particular order (and demonstrating no photographic skill whatsoever), here are some shots I snapped with my tablet's camera.

These two sculptures flank the entrance to the park:

(Sorry about the largely illegible engraving; the sun deserted me in my hour of need.) Toward the middle of the park, we have this somewhat curious choice:

I'm not sure what (if anything) they have to do with literature, and I could swear they were extras in an episode of Voyage to the Bottom of the Sea (a major contributor to my aversion to fish in restaurants).

Now for the lamp posts:

There was one other signpost, which I did not shoot because no angle did it justice. It had a half dozen or so signs pointing to other cities and towns named "Charlotte" (including Michigan's Charlotte, mispronounced "shar-LOT" by the locals). I have to wonder whether having a sign already devoted to the name "Charlotte" is why Charlotte Bronte did not earn a lamp post. (Alternate theories: Texas has the only town named "Bronte" in the U.S.; or Emily's shade bribed someone to one-up her sister.)

Friday, November 25, 2011

Android-Linux File Transfers

I recently acquired a Toshiba Thrive tablet (Android Honeycomb, currently version 3.2.1), in part because it comes with two USB ports (one full size, one mini). The USB ports theoretically allow me to move files between my Ubuntu PC and the tablet by mounting the tablet as a disk drive on the PC. I say "theoretically" because Google recently moved to Media Transfer Protocol for handling connections over USB. This seems to be okay for Windows PCs and Macs, but for Ubuntu (and other Linux systems) it requires using the mtpfs package. I've found connections to be excruciatingly slow, and for some reason (probably a setting I'm missing), when I do get connected, all I see is an empty root and an empty Playlists subdirectory. Trust me, there are tons of files on the Thrive.

Fortunately, I found WebSharingLite File/Media Sync, a free app in the Android Market. (As one might expect from the "Lite" part, there is a paid version, rather economically priced.) I have a WiFi DSL gateway at home (and of course my laptop has WiFi). Running WebSharingLite on the tablet, I can connect via a web browser from my PC or laptop and upload/download files considerably faster than what mtpfs over USB seems to allow. (Again, though, I should stress that there's no guarantee I'm setting up mtpfs correctly, despite hours of poking around the web looking for help.) The connection requires a password; coupled with the fact that it's on a nonroutable segment, behind the firewall in my gateway, I think the security is adequate.

I can definitely recommend WebSharingLite.

Back to Back Conferences

I'm back from two conferences in consecutive weeks: INFORMS (Charlotte NC) and DSI (Boston). (The link to the DSI meeting is a bit ephemeral -- it will eventually point to the 2012 meeting.) Two conferences in two weeks is a bit hard on both the waistline and the brain. I have a few odds and ends to share.

Repeating something I posted on the INFORMS conference blog, I found out from Peter Horner (editor of Analytics Magazine) that a recent change in the magazine’s URL (from analytics-magazine.com to analytics-magazine.org) may have broken some people’s RSS subscriptions to the web site, as well as screwing up the site's Google ranking. If you subscribe to the magazine's RSS feed, you might want to test the feed and resubscribe if necessary. If you're unfamiliar with the magazine, it is worth taking a look (and, if you choose, using the RSS button at the lower left to subscribe). For readers of this blog who are active on social networks, please help spread the word.

Changing gears, in a previous post I wrote about PowerPoint users suffering from invisible math formulas when they ran their presentations on someone else's laptop at a conference. Out of curiosity, I made a list at the INFORMS meeting of how many presenters I saw using different types of software. My classification system was a bit fuzzy. Beamer presentations tend to be distinctive, but PowerPoint presentations are not as obviously PowerPoint unless you see the presenter launching them from the PowerPoint program. Similarly, the only ways I have to determine that a presentation was done in Keynote (Apple's presentation software) are if it contains tons of eye candy, or it is being played from an MOV file, or if I see the presenter using a Mac. Besides Beamer, there are several other ways to produce presentations in LaTeX (including FoilTeX and Powerdot), and I don't know how to tell the end products of those apart. So my classification system was Beamer, PowerPoint, Keynote or "Other" (where "Other" could include any misclassified presentations).

As it turns out, I did not see any presentations that I could detect were done in Keynote. (I think Tim Hopper might have used it for his portion of our panel session on social media, but I had my back to the screen when he was presenting.) My final count was 24 Beamer files, 9 PowerPoint files and 8 "Other" files.

I came away with two conclusions. The first is that PowerPoint may be losing market share among the INFORMS crowd, since a few years ago my informal survey had an even split between Beamer and PowerPoint. Then again, this may reflect a decrease in the number of US presenters at INFORMS, since LaTeX (and Beamer) seem to be more popular among European and possibly Asian users than among Americans. PowerPoint was pretty ubiquitous at the DSI meeting (which is populated mainly with business school faculty and doctoral students). The second conclusion is that either PowerPoint has gotten better or people have gotten better at using it: there were no missing formulas in any of the papers I saw presented.

Friday, November 11, 2011

Blogging at INFORMS 2011

I volunteered to be a guest blogger at the 2011 INFORMS national meeting in Charlotte, NC. I haven't even hopped a plane yet, and I've already spammed the blog (er, posted) once. You can find the post, if you're curious, at http://meetings2.informs.org/charlotte2011/blog/?p=145. There is quite a cast of bloggers for the meeting, so if you are attending (or just interested), I recommend subscribing to the RSS feed.

Monday, November 7, 2011

Mint/Ubuntu Update Broke Printing

Today I was suddenly unable to use my home HP ink jet printer with Linux Mint, despite the fact that this morning it was printing fine from Windows (my system is dual-boot). The error message informed me that /usr/lib/cups/backend/hp had failed for unspecified reasons. Toggling the printer off and back on did not help, nor did rebooting the system, nor did switching the driver.

A quick web search, however, revealed the fact that some unspecified update had screwed up permissions on the /usr/lib/cups/backend directory (restricting it to root only). Most of the executables in that directory looked okay, but the permissions for cups-pdf were also root only, so I'm guessing that it was an update to cups-pdf that blew things up.

The solution: sudo chmod -R 755 /usr/lib/cups/backend.

I thought Microsoft had a patent on destructive updates. Guess not.

Saturday, October 29, 2011

Scalable Images in Blogger

Questions about a recent post containing a couple of flow charts made me regret the fact that Blogger does not support SVG graphics. Although I made the charts using the excellent TikZ LaTeX package (which produces vector output in the form of a PDF file), I had to convert the PDF output to PNG for Blogger, and the images are a tad fuzzy (and do not scale well when zoomed), which may have led to some confusion. So I was on a mission today to find a workaround.

The first thing I discovered is that SVG is not nirvana. I generated the images as separate PDF files from the TikZ source. (Hint to people doing this: use the LaTeX standalone document class and the output file will automatically be cropped to the smallest rectangle containing the image.) I then used ImageMagick to convert the PDF files to SVG files ... which blew them up from around 54 KB to about 8+ MB. Each. Oops! I tried a tool I found to convert the SVG files to "canvas" files, which got me another 5x or so increase in size. So back to PDF files. Blogger does not consider PDFs to be images, so the plan now is to use a PNG file as the image and link it to the corresponding PDF, allowing readers to click on the image and at least get a scalable version in a new window or tab.

Blogger does not allow you to upload arbitrary files, only images in formats it recognizes (which are actually stored in Picasa, another Google product/service). I thought about putting the PDFs on a personal server somewhere, but I worry about moving things (including them) to a new server at some future date and not remembering to update the links. Enter Google Documents. I just have to upload the PDFs to Google Docs, make them public, grab the URLs and set the links on the PNGs (which by default point to the PNG files in Picasa) to point to the PDFs in Google Docs instead.

I did this with the aforementioned post, and I think it's working. The one question mark for me is whether everyone can see them. I can, but Google knows I'm the owner. If either of my loyal readers runs into problems, or wants me to convert images from earlier posts, please drop a comment here.

Friday, October 21, 2011

Time Series, Causality and Renting a Congressman

This morning I was reading an article, in a national (US) news magazine, that discussed energy policy decisions. It mentioned executives of companies in various energy-related industries contributing to the coffers of various congressmen (from both parties), and it mentioned their votes on key policy issues. The implication was clear: the votes were being bought, or at least influenced, by interested parties.

I understand why writers do this: it spices up the articles (a hint of corruption) without making any explicit charges that cannot be backed up with hard evidence; and it appeals to voters looking for confirmation of their prior belief that congressmen are crooks. I tend to view our elected representatives as by and large venal to some extent myself. The last part of the title of this post is motivated by a quote from Simon Cameron (Abraham Lincoln's first Secretary of War): "An honest politician is one who, when he is bought, will stay bought." As you can tell from the title, I am not sanguine about finding honest politicians.

As an OR professional, though, I understand the flaw in the argument (if we can characterize what is really innuendo as an "argument"). It relates in part to confusing correlation with causation and in part to confusing the direction of causation. Let's say that our fearless reporter accurately reports that Representative A votes for subsidies for domestic production of buggy whips, and Ms. B, CEO of a large manufacturer of buggy whips, donates regularly and/or generously to Rep. A's election campaigns. With minimal effort choosing words, our reporter can make this sound as if A voted for subsidies because B (and perhaps others of a similar mind) paid him off. That is indeed one possibility.

Another possibility is that A voted for subsidies first and then B donated, recognizing A (from his vote) as a friend of the buggy whip industry. There's causation, but in the opposite direction from what the reporter implied, and nothing venal. The nature of political contributions is that you usually contribute to candidates with whom you agree. (I say usually because lobbyists sometimes contribute to both sides, hoping the winner will remember their contribution to her and not to her opponent.)

Yet another possibility is that B contributed to A first, either anticipating a pro-buggy whip platform or for reasons unrelated to industrial policy, and A favors subsidizing domestic production for sincerely held (if possibly misguided) reasons. This is a case of trying to infer causation from what may be mere correlation.

How can we tell whether A's vote is in fact being purchased? Anyone who has done much with statistics recognizes how hard it is to establish causality, especially in the absence of a designed experiment with an appropriate control group. We could try tracking A's votes over time, matching them with B's contributions (or the contributions of B and other pro-subsidy individuals or groups), and look for a positive cross-correlation with lag 1 or higher (so that swings in donations lead swings in votes). A positive cross-correlation would still not be solid proof of vote-buying, though, and might also lack "power" as a test of vote-buying: if A fits Cameron's definition of an honest politician, and consistently votes pro-buggy whip because he is taking graft, there will be no variance in his votes and therefore no cross-correlation.
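For the curious, the lagged cross-correlation is simple enough to compute by hand. The Java sketch below is purely illustrative and rests on assumptions of my own: votes are coded 1 (pro-subsidy) or 0 (anti), donations are totals per period, the two series are aligned period by period, and the class and method names are invented. It uses the usual sample formula, dividing the lagged cross-covariance by the product of the two standard deviations.

```java
/** Sample cross-correlation between donations at time t and votes at time t + lag. */
final class LaggedCrossCorrelation {

    /**
     * Returns the lag-k sample cross-correlation (k >= 0), or NaN when either
     * series has zero variance, in which case the statistic is undefined.
     */
    static double crossCorrelation(double[] donations, double[] votes, int lag) {
        int n = Math.min(donations.length, votes.length);
        if (lag < 0 || lag >= n) {
            throw new IllegalArgumentException("lag must be in [0, n)");
        }
        double meanD = 0.0, meanV = 0.0;
        for (int t = 0; t < n; t++) {
            meanD += donations[t];
            meanV += votes[t];
        }
        meanD /= n;
        meanV /= n;
        double varD = 0.0, varV = 0.0, cov = 0.0;
        for (int t = 0; t < n; t++) {
            varD += (donations[t] - meanD) * (donations[t] - meanD);
            varV += (votes[t] - meanV) * (votes[t] - meanV);
            if (t + lag < n) {
                // Pair this period's donations with the vote `lag` periods later.
                cov += (donations[t] - meanD) * (votes[t + lag] - meanV);
            }
        }
        double denom = Math.sqrt(varD * varV);
        return denom == 0.0 ? Double.NaN : cov / denom;
    }

    public static void main(String[] args) {
        double[] donations = {0, 1, 0, 1, 0, 1};  // made-up data
        double[] swinging  = {1, 0, 1, 0, 1, 0};  // each vote echoes the previous donation
        double[] bought    = {1, 1, 1, 1, 1, 1};  // always votes pro-buggy whip
        System.out.println(crossCorrelation(donations, swinging, 1));
        System.out.println(crossCorrelation(donations, bought, 1));
    }
}
```

The second series illustrates the "honest politician" problem: with no variance in the votes, the denominator is zero and the statistic is undefined, so the sketch returns NaN rather than anything that could be read as evidence either way.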

This leaves me in the unsatisfying position of not knowing whether or not A is a crook. There have been enough US congressmen convicted of malfeasance to convince me that the proportion of occupants of Capitol Hill who are crooks is not zero. Still, there is also a well known aphorism (of uncertain provenance) that might apply: "Never ascribe to malice that which is adequately explained by incompetence." We have ample evidence that incompetence is not in short supply on the Hill.

Sunday, October 9, 2011

Benders Decomposition Then and Now

A couple of years ago, a coauthor and I had a paper under review at a prestigious journal that shall remain nameless. In the paper we solved a mixed integer linear program (MILP) using Benders decomposition, with CPLEX as the solver, and we employed callbacks to check each potential solution to the master problem as it occurred. One of the reviewers asked me to justify the use of callbacks. My first inclination was a variation on “because it's standard practice”, but since I have not actually surveyed practitioners to confirm that it is standard (and because, as an academic, I'm naturally loquacious), I gave a longer explanation. What brought this to mind is a recent email exchange in which someone else asked me why I used callbacks with Benders decomposition. It occurred to me that, assuming I'm correct about it being the current norm, this may be a case of textbooks not having caught up to practice. So here is a quick (?) explanation.

The original paper by Jack Benders [1] dealt with both continuous and discrete optimization, linear and nonlinear. The application I have in mind here deals with problems that, without loss of generality, can be modeled as follows:

\[ \begin{array}{lrclcc} \textrm{minimize} & c_{1}'x & + & c_{2}'y\\ \textrm{subject to} & Ax & & & \ge & a\\ & & & By & \ge & b\\ & Dx & + & Ey & \ge & d\\ & x & \in & \mathbb{Z}^{m}\\ & y & \in & \mathbb{R}^{n} \end{array} \]

Benders decomposition splits this into a master problem and a subproblem. The subproblem must be a linear program (LP), so all the discrete variables have to go into the master problem (a MILP). You can optionally keep some of the continuous variables in the master, but I will not do so here. You can also optionally create multiple subproblems (with disjoint subsets of $y$), but again I will not do so here. Finally, in the spirit of keeping things simple (and because unbounded models are usually a sign of a lazy modeler), I'll assume that the original problem is bounded (if feasible), which implies that both the master and subproblem will be bounded (if feasible).

The master problem is as follows:

\[ \begin{array}{lrclccc} \textrm{minimize} & c_{1}'x & + & z\\ \textrm{subject to} & Ax & & & \ge & a\\ & g'x & + & z & \ge & f & \forall (g,f)\in\mathcal{O}\\ & g'x & & & \ge & f & \forall (g,f)\in\mathcal{F}\\ & x & \in & \mathbb{Z}^{m}\\ & z & \in & \mathbb{R} \end{array}. \]

Variable $z$ acts as a surrogate for the objective contribution $c_{2}'y$ of the continuous variables. Set $\mathcal{O}$ contains what are sometimes known as “optimality cuts”: cuts that correct underestimation of $c_{2}'y$ by $z$. $\mathcal{F}$ contains what are sometimes known as “feasibility cuts”: cuts that eliminate solutions $x$ for which the subproblem is infeasible. Initially $\mathcal{O}=\emptyset=\mathcal{F}$. The subproblem, for a given $x$ feasible in the master problem, is:

\[ \begin{array}{lrcl} \textrm{minimize} & c_{2}'y\\ \textrm{subject to} & By & \ge & b\\ & Ey & \ge & d-Dx\\ & y & \in & \mathbb{R}^{n} \end{array} \]

Optimality cuts are generated using the dual solution to a feasible subproblem; feasibility cuts are generated using an extreme ray of the dual of an infeasible subproblem. The precise details are unnecessary for this post.
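For readers who do want a glimpse of the details, here is a sketch of where the cut coefficients $(g,f)$ can come from. The multiplier names $u$ and $v$ are introduced just for this sketch: assume $u\ge 0$ is attached to $By\ge b$ and $v\ge 0$ to $Ey\ge d-Dx$. The dual of the subproblem is then

\[ \begin{array}{lrcl} \textrm{maximize} & b'u+(d-Dx)'v\\ \textrm{subject to} & B'u+E'v & = & c_{2}\\ & u,v & \ge & 0 \end{array} \]

and its feasible region does not depend on $x$. If the subproblem is feasible with optimal dual solution $(u^{*},v^{*})$, weak duality gives $c_{2}'y\ge b'u^{*}+(d-Dx)'v^{*}$ for every $x$ and every feasible $y$, which yields the optimality cut

\[ (D'v^{*})'x+z\ge b'u^{*}+d'v^{*}, \]

that is, $g=D'v^{*}$ and $f=b'u^{*}+d'v^{*}$. If the subproblem is infeasible, the dual has an extreme ray $(\tilde{u},\tilde{v})$ with $B'\tilde{u}+E'\tilde{v}=0$ and $b'\tilde{u}+(d-Dx)'\tilde{v}\gt 0$. Any $x$ for which a feasible $y$ exists must satisfy $b'\tilde{u}+(d-Dx)'\tilde{v}\le 0$, which yields the feasibility cut

\[ (D'\tilde{v})'x\ge b'\tilde{u}+d'\tilde{v}, \]

that is, $g=D'\tilde{v}$ and $f=b'\tilde{u}+d'\tilde{v}$.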

Back in the '60s ('70s, '80s), solvers were essentially closed boxes: you inserted a problem, set some parameters, started them and went off to read the collected works of Jane Austen. Intervening in the algorithms was not an option. Thus the original flowchart for Benders decomposition looked like the following.

The modern approach, using callbacks, looks like this:

Obviously the modern approach can only be implemented if you are using a solver that supports callbacks, and requires more advanced programming than the classical approach. Other than that, what are the advantages and disadvantages?

The main advantage of the callback approach is that it is likely to avoid considerable rework. In the original approach, each time you add a cut to the master problem, you have to solve it anew. Although the new cuts may change the structure of the solution tree (by changing the solver's branching decisions), you are probably going to spend time revisiting candidate solutions that you had already eliminated earlier. Moreover, you may actually encounter the optimal solution to the original problem and then discard it, because a superoptimal solution that is either infeasible in the original problem or has an artificially superior value of $z$ causes the true optimum to appear suboptimal. With the callback approach, you use a single search tree, never revisit a node, and never overlook a truly superior solution.

The potential disadvantage of the callback approach is that you potentially generate a cut each time a new incumbent is found, and some of those cuts quite possibly would have been avoided if you followed the classical approach (and added a cut only when an “optimal” solution was found). Modern solvers are good at carrying “lazy” constraints in a pool, rather than adding the cuts to the active constraint set, but there is still a chance that the modern approach will take longer than the classical approach. I believe (but have no data to support my belief) that the modern approach will typically prove the faster of the two.
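To illustrate the classical loop (and the repeated master solves it entails), here is a toy sketch in plain Python. Everything in it is an assumption made for illustration: a single integer variable $x$, a single continuous variable $y\ge 0$ with one linking constraint $y\ge d-Dx$, a cost $c_2\gt 0$ (so that $z\ge 0$ is a valid initial bound), and a brute-force enumeration standing in for a real MILP solver. The toy subproblem is always feasible, so only optimality cuts arise.

```python
def solve_subproblem(x, c2, d, D):
    """Toy subproblem: minimize c2*y subject to y >= 0 and y >= d - D*x.

    Returns (optimal value, dual multiplier v on the linking constraint).
    Assumes c2 > 0.
    """
    rhs = d - D * x
    if rhs > 0:
        return c2 * rhs, c2  # linking constraint binds, so its dual is c2
    return 0.0, 0.0          # y = 0 is optimal and the linking dual is zero


def solve_master(c1, x_values, cuts):
    """Brute-force stand-in for a MILP solver.

    For each candidate x, the smallest z satisfying z >= 0 and every cut
    g*x + z >= f is max(0, f - g*x over all cuts).
    """
    best = None
    for x in x_values:
        z = max([0.0] + [f - g * x for (g, f) in cuts])
        value = c1 * x + z
        if best is None or value < best[0]:
            best = (value, x, z)
    return best


def classical_benders(c1, c2, d, D, x_values, tol=1e-9):
    """Classical loop: re-solve the master from scratch after every new cut."""
    cuts = []  # optimality cuts (g, f), meaning g*x + z >= f
    master_solves = 0
    while True:
        value, x, z = solve_master(c1, x_values, cuts)
        master_solves += 1
        sub_value, v = solve_subproblem(x, c2, d, D)
        if z >= sub_value - tol:
            return x, value, master_solves  # z is no longer an underestimate
        cuts.append((D * v, d * v))         # cut: (D*v)*x + z >= d*v


# minimize x + 3y subject to 2x + y >= 10, y >= 0, x in {0, ..., 10}
print(classical_benders(c1=1, c2=3, d=10, D=2, x_values=range(11)))
```

In this tiny instance the master is solved twice; in a real model each of those solves is a full branch-and-bound run, which is the rework the callback approach avoids.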

[1] Benders, J. F., Partitioning Procedures for Solving Mixed-Variables Programming Problems, Numerische Mathematik 4 (1962), 238--252.


Friday, September 30, 2011

OR, the Environment, and the Law of Unintended Consequences

The topic of the INFORMS blog challenge for September is "OR and the Environment", and I'm slipping this in just under the wire. My guess is that most if not all of the other challenge entries will extol some way in which the use of OR helps the environment. I shall be (slightly) contrarian here.

*****

Problem: Reduce the cost of shipping raw materials and manufactured goods by sea.

Solution: Companies use OR techniques to pack vessels more efficiently (reducing the number of loads), route vessels more efficiently (reducing transit times and, hopefully, total travel distances), etc.

Short-term Environmental Impact: Fewer ships covering less distance means less consumption of fossil fuels, so less air (and water) pollution.

Long-term Environmental Impact: Lower shipping costs make it more cost effective for manufacturers in Europe and the US to purchase materials and components from distant countries such as India and China, shifting the manufacturing operations from regions with relatively stringent environmental regulations to regions with more lax regulations. The increased volume being shipped by ocean more than offsets the reduction in distance per shipment and results in an increase in ocean traffic.

*****

Problem: Make alternative fuel sources for automobiles more cost-effective.

Solution: Employ OR techniques to improve the manufacture and distribution of gasohol (gasoline/alcohol mixtures), reducing the pump price and therefore expanding the consumption of gasohol.

Short-term Environmental Impact: Some of the demand for a non-renewable source (fossil fuels) with a relatively high pollutant output is shifted to a renewable source (crops such as corn) with a lower pollutant content.

Long-term Environmental Impact: Crops previously grown as animal feed or for human consumption are diverted to the more profitable biofuel production, causing shortages or price increases in food. In some countries, this causes farmers to clear forest areas for replanting with food crops. Forests capture carbon more efficiently than food crops do, and clearing a forest by burning it releases significant amounts of carbon. (There are also arguments in both directions as to whether biofuels actually produce a net gain in energy or reduce net pollution when the activities involved in growing the crops are factored in.)

*****

Does this mean that I think OR is bad for the environment? Not at all; but it's not automatically good for the environment either. What OR brings to the table that might be most important, in the context of environmental impact, is a systems perspective. Hopefully OR practitioners can help decision-makers view problems in sufficient breadth, and yet with sufficient (model-aided?) clarity, to recognize the secondary and tertiary effects of their choices. That still leaves the issue of getting environmental impact on the table as a criterion for evaluating choices -- which is a political, not mathematical problem.


Sunday, September 25, 2011

Fixing TiKZ/PGF to Work with Gnuplot

While working on a new presentation today (in Beamer), I grabbed a slide from a previous presentation (one I reuse annually). The slide contains PGF code that uses Gnuplot to plot functions (which I then annotate using PGF commands). Small problem: the transplanted slide didn't work (no curves on the plot) ... which led me to attempt to recompile the original presentation and discover that it, too, no longer worked. Grrr!

Poking in the files in the /tmp directory showed me that Gnuplot was crabbing about a command (set terminal table) that PGF uses to tell it to create a text file with the points to be plotted. A bit of searching led me to a blog post by Toine Bogers containing the fix. Instructions for Linux follow (I haven't tried it on Windows yet):


- Open a terminal and run 'kpsewhich pgfmoduleplot.code.tex' to locate the file you need to edit. (The location varies with the LaTeX distribution you use; this takes the work out of finding it.)

- Issue 'sudo gedit <path>/pgfmoduleplot.code.tex' (where <path> is the path you found in the previous step) and give your password when prompted. (Feel free to use a different editor if gedit is not your cup of tea.)

- Find the line containing 'set terminal table' and delete the word 'terminal', so that it becomes 'set table' (the rest of the line staying unchanged).

- Save the modified file, and you should be good to go.
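If you would rather script the edit than do it by hand, the steps above can be sketched in Python roughly as follows. This is a sketch under assumptions, not a tested installer: the function names are my own, it assumes kpsewhich is on your path and that you run it with enough privileges to write to the file (e.g., under sudo), and it writes a .bak copy first in case something goes wrong.

```python
import subprocess
from pathlib import Path

OLD_COMMAND = "set terminal table"  # what the PGF file sends to Gnuplot
NEW_COMMAND = "set table"           # the replacement described above


def patch_text(source: str) -> str:
    """Return the text with 'set terminal table' changed to 'set table'."""
    return source.replace(OLD_COMMAND, NEW_COMMAND)


def patch_pgf_module() -> Path:
    """Locate pgfmoduleplot.code.tex via kpsewhich and patch it in place.

    A backup copy (same name plus '.bak') is written next to the original.
    Requires write permission on the file's directory.
    """
    result = subprocess.run(
        ["kpsewhich", "pgfmoduleplot.code.tex"],
        capture_output=True, text=True, check=True,
    )
    path = Path(result.stdout.strip())
    original = path.read_text()
    path.with_name(path.name + ".bak").write_text(original)
    path.write_text(patch_text(original))
    return path
```

Calling patch_pgf_module() performs the whole fix; patch_text() alone can be tried on a copy of the file's contents first if you want to see what would change.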

I love open source software, but one of the challenges of using lots of separate pieces of software is that developers will change something in the latest version of one program or library, and that change will break some interaction with another program/library from an entirely separate developer. To modify a saying from my misspent youth: "You pays your money [or, in this case, you don't] and you takes your chances."

Saturday, September 24, 2011

Fixing CUPS-PDF

Not that I needed to find another bug in Mint 11 Katya/Ubuntu 11 Natty, but I just discovered that the "Print to PDF" printer (cups-pdf) was writing a 2.1 KB PDF file containing a single blank page, regardless of what I printed. A quick web search turned up multiple reports of this. I used Synaptic to reinstall the cups-pdf package, to no avail. The solution (which I found on a bug report web page) was to go into Control Center > Printing, delete the "Print to PDF" printer entirely, then use Server > New Printer to reinstall it. It seems to be working now. I wonder what the interarrival time for the next gremlin is. :-(

Thursday, September 15, 2011

Comment Spam

In the interest of free and open discussion (and because I've been known to make mistakes, which I hope someone will correct), I leave this blog open to comments from anyone, with no registration requirement. To date I've gotten very little comment spam. (If you're not sure what "comment spam" is, it's some self-promoting poster child for rectal problems leaving a comment that has nothing to do with the actual blog entry and everything to do with drawing attention to a website they own.) On the rare occasions when I do get comment spam, Blogger is fairly good about moving it to the spam file on its own.

That raises a point I should make for actual readers (and you both know who you are): if you write a comment and shortly thereafter it seems to have disappeared, don't worry. Blogger has moved it to the spam file for some reason, but I still get an email ping that the comment was posted. I always check comments to be sure they ended up in the right place, and if your comment was mistakenly classified as spam (this has happened at least once and at most twice in the history of the blog), I will restore it to its rightful place in the sunshine.

In the past couple of days, I've gotten the same comment spam message twice. Curiously, Blogger correctly identified it as spam the first time but not the second time. It's a link to the "Team PotentiaMED" web site. The information blurb on their site is long on meaningless buzz phrases and a bit short on specifics, but I think they are some sort of consulting operation. I'll let you judge their merits for yourself, if you're curious. Personally, I automatically assume that any business relying on spam to get customers probably lacks solid reasons to patronize them (the sort of reasons that would make for an effective conventional marketing campaign). I also don't trust anyone who spams.

By way of contrast, the proprietors of Online Engineering Degree contacted me by email to ask permission to post a comment pointing to one of their pages, a list of Q&A sites. Instead, I devoted a short post to providing the link, since (a) I thought it might be relevant to readers with an interest in industrial engineering and (b) it was not really relevant as a comment to any particular existing post. A writer from Masters in Engineering wrote to me asking if I would like to mention a page listing forums and boards for engineers. The sites listed were potentially useful to engineers in general but did not seem very useful to IEs, so I declined. I want to give both of them credit for doing things the right way.

Now if I can just find a way to report comment spammers to Blogger ...


Sunday, August 21, 2011

Piecewise-linear Functions Redux

A recent question on OR-Exchange about step functions in mathematical programs made me decide to update an earlier post about piecewise-linear functions.

In the prior post I dealt with continuous piecewise-linear functions (of a single variable) in mathematical programs. As a quick recap, if the function represents a diseconomy of scale (convex in the objective of a minimization problem or concave in the objective of a maximization problem), as in the first plot below, it can be modeled strictly with linear inequality constraints (no binary variables needed). Note that convexity implies continuity everywhere except possibly the endpoints (where you can have a jump discontinuity and still be convex or concave). The method I previously described requires continuity at the endpoints as well.
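As a reminder of what an all-inequality formulation can look like, here is one standard construction, sketched in the notation used later in this post (break points $a_{1}\lt\cdots\lt a_{n+1}$ with $f_{i}=f(a_{i})$); the slope symbol $s_{i}$ is introduced just for this sketch. For a convex $f$ in the objective of a minimization problem, replace $f(x)$ with a variable $y$, bound $x$ by $a_{1}\le x\le a_{n+1}$, and add one inequality per segment:

\[ y\ge f_{i}+s_{i}(x-a_{i}),\quad s_{i}=\frac{f_{i+1}-f_{i}}{a_{i+1}-a_{i}},\quad i=1,\dots,n. \]

Because $f$ is convex, on $[a_{1},a_{n+1}]$ it equals the maximum of these $n$ linear functions, and minimization pushes $y$ down onto that maximum, so $y=f(x)$ at optimality.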

If the function is continuous but lacks the appropriate convexity/concavity, as in the next plot (which is neither convex nor concave), the all-inequality trick fails. It may also fail for convex or concave functions that appear in constraints, either because the convexity of the function does not match the direction of the inequality (you have $f(x)\ge b$ where $f()$ is convex or $f(x)\le b$ where $f()$ is concave), or because $f()$ is tied to other variables in the constraints in such a way that there is a diseconomy of scale to the direct contribution of $f()$ to the objective value but an economy of scale overall (overestimating the value of convex $f()$ or underestimating the value of concave $f()$ allows the other variables to take values that should be infeasible given the value of $x$). If $f()$ appears in any constraints and you are not absolutely certain that it matches the directions of the constraints in which it appears and represents an overall diseconomy of scale, treat it as an arbitrary continuous piecewise-linear function, as discussed next.

Again recapping the previous post, for an arbitrary continuous piecewise linear function $f(x)$ with break points $a_{1}\lt a_{2}\lt\cdots\lt a_{n+1}$ and values $f_{i}=f(a_{i})$ at the break points, we introduce continuous variables $\lambda_{1},\dots,\lambda_{n+1}$, add the constraint $\lambda_{1}+\cdots+\lambda_{n+1}=1$, and tell the solver that the $\lambda$ variables form an SOS2 set. We then add the constraint $x=a_{1}\lambda_{1}+\cdots+a_{n+1}\lambda_{n+1}$ and replace $y=f(x)$ with $y=f_{1}\lambda_{1}+\cdots+f_{n+1}\lambda_{n+1}$. If the solver does not understand SOS2 sets, it is necessary to introduce binary variables (as described in the previous post).
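To see what the SOS2 constraints are doing, here is a small plain-Python sketch (no solver involved; the function name and the sample break points are made up for illustration). For a given $x$ it constructs the weights $\lambda$ that the constraints would force: at most two nonzero, adjacent, summing to 1, and reproducing both $x$ and $f(x)$.

```python
from bisect import bisect_right


def sos2_weights(breakpoints, x):
    """Return lambda weights for x: at most two adjacent entries are nonzero,
    they sum to 1, and sum(a_i * lambda_i) equals x."""
    if not breakpoints[0] <= x <= breakpoints[-1]:
        raise ValueError("x must lie between the first and last break point")
    # Index of the segment [a_i, a_{i+1}] containing x (last segment if x is
    # the final break point).
    i = min(bisect_right(breakpoints, x) - 1, len(breakpoints) - 2)
    lo, hi = breakpoints[i], breakpoints[i + 1]
    t = (x - lo) / (hi - lo)
    weights = [0.0] * len(breakpoints)
    weights[i] = 1.0 - t
    weights[i + 1] = t
    return weights


a = [0, 2, 5, 9]  # hypothetical break points a_1, ..., a_{n+1}
f = [1, 4, 3, 7]  # hypothetical values f_i = f(a_i)

lam = sos2_weights(a, 3.5)
x_check = sum(ai * li for ai, li in zip(a, lam))  # recovers x
y = sum(fi * li for fi, li in zip(f, lam))        # interpolated f(x)
print(lam, x_check, y)
```

Without the SOS2 restriction, the solver could put weight on non-adjacent break points (say $\lambda_{1}$ and $\lambda_{4}$) and obtain a $y$ that lies on a chord rather than on the graph of $f$.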

Step functions, such as the one plotted below, are not continuous, so nothing from the earlier post applies to them. Suppose we have break points $a_{i}$ as above, with $f(x)=f_{i}$ when $a_{i}\le x\lt a_{i+1}$. The first problem we confront is that mathematical programs abhor strict inequalities. To a mathematical programming solver, there is no difference between $x\lt a_{i+1}$ and $x\le a_{i+1}$ unless $x$ is a discrete variable (i.e., there is a largest possible value for $x$ strictly less than $a_{i+1}$). That will not be the case here, so we will simply have to deal with the fact that if the solver decides it wants $x=a_{i+1}$, it will set $f(x)$ arbitrarily to whichever of $f_{i}$ and $f_{i+1}$ makes for the better objective result.

To model a step function, we use an SOS1 rather than SOS2 set. Add binary variables $u_{1},\dots,u_{n}$ constrained by $u_{1}+\cdots+u_{n}=1$, declare them to be an SOS1 set, and include the constraints \begin{align*} \sum_{i=1}^{n}a_{i}u_{i}\le x & \le\sum_{i=1}^{n}a_{i+1}u_{i}\\ y & =\sum_{i=1}^{n}f_{i}u_{i}. \end{align*} (Explicit declaration of the SOS1 set may not be necessary; some solvers recognize SOS1 sets automatically, and some may not exploit the useful properties of an SOS1 set even if the set is identified for them by the modeler.)
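Here is a small plain-Python sketch of how the binary variables behave (again no solver; the function names and sample data are made up for illustration). It picks the indicator vector $u$ for a given $x$, checks the two constraints, and shows the tie at a break point mentioned earlier: there, two different choices of $u$ are both feasible.

```python
from bisect import bisect_right


def step_selector(breakpoints, x):
    """Return u with u_i = 1 for the interval [a_i, a_{i+1}) containing x
    (the last interval also includes its right endpoint)."""
    n = len(breakpoints) - 1
    if not breakpoints[0] <= x <= breakpoints[-1]:
        raise ValueError("x must lie between the first and last break point")
    i = min(bisect_right(breakpoints, x) - 1, n - 1)
    u = [0] * n
    u[i] = 1
    return u


def is_feasible(breakpoints, u, x):
    """Check sum(u) == 1 and sum(a_i u_i) <= x <= sum(a_{i+1} u_i)."""
    lower = sum(a * ui for a, ui in zip(breakpoints[:-1], u))
    upper = sum(a * ui for a, ui in zip(breakpoints[1:], u))
    return sum(u) == 1 and lower <= x <= upper


a = [0, 2, 5, 9]  # hypothetical break points a_1, ..., a_{n+1}
f = [1, 4, 3]     # hypothetical step values f_1, ..., f_n

u = step_selector(a, 3.5)
y = sum(fi * ui for fi, ui in zip(f, u))
print(u, y)

# At the break point x = 2 both neighboring intervals satisfy the constraints,
# so a solver is free to pick whichever value of y it prefers.
print(is_feasible(a, [1, 0, 0], 2), is_feasible(a, [0, 1, 0], 2))
```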

One last point: both the SOS1 and SOS2 methods require that $x$ have a finite upper bound ($a_{n+1}$). That upper bound will show up as a coefficient in the constraint matrix. The larger it is, the more likely the model is to be numerically unstable. So avoid the temptation to set $a_{n+1}$ equal to the largest floating point value your compiler can handle. Infinity is not your friend here.

Hmm. This is all a bit dry. Maybe a soundtrack would help?


Saturday, August 20, 2011

The Heisenberg Budget Principle

Watching Congress turn sirloin into (bad) hamburger during the recent borrowing limit debate/debacle, I realized that the Heisenberg Uncertainty Principle translates somewhat cleanly from particle physics to political economics:

The difference of two numbers can simultaneously have both positive and negative sign, depending on the position of the viewer (left or right side of the aisle). I don't think this actually works in the real universe, but in the bubble universe of Washington D.C. it appears to hold.

Edit: I confess that I suck at physics. This probably is a manifestation of Einstein's special relativity rather than Heisenberg's uncertainty principle. First, it's not the act of observing the difference from a particular vantage point that makes it positive or negative; it's the location of the observer (and/or the observer's velocity toward the extreme of his/her party's ideology). Second, there is no uncertainty involved: one party is absolutely, irrefutably certain the difference is positive; and the other party is equally certain the difference is negative. To admit a grain of doubt is to cause your party's bubble universe to collapse noisily and, worse still, to concede the possibility the other guys are right (this once).

Tuesday, August 16, 2011

Spontaneous Reboots

Mint Katya (a derivative of Ubuntu Natty Narwhal) on my AMD-64 system has suddenly developed a penchant for spontaneously rebooting. So far, it has only happened while I'm typing. I think it has happened twice when I was typing messages in Thunderbird and once or perhaps twice when I was typing in the Yoono plug-in for Firefox. I don't recall any recent updates to T-bird, Firefox or Yoono, nor have I changed my keyboard (in case this is related to n-key rollover -- my fingers sometimes get ahead of my brain when I'm typing). The mosquitoes have gotten quite aggressive (and numerous) around my house; maybe they drove some gremlins indoors?

Update #1: I was sloppy above. It's not Mint that is rebooting; it's the X server. This is apparently a common problem, although the variety of symptoms posted there suggests that this is in fact multiple distinct bugs with a common end result (X crashing). Things I have discovered:

- Unlike several of those reports, I have not experienced any crashes as a result of clicking. Only typing triggers a crash.

- Unlike the reports from laptop users, power management is not the culprit (the machine that crashes for me is a desktop).

- Someone pointed the finger at running the AMD-64 version of Natty on an Intel CPU. My copy of Mint is in fact based on the AMD-64 Natty, but my box has an AMD-64 CPU.

- Turning the proprietary nVidia driver off and then on did not help. (I have a GPU from nVidia.)

- Installing xserver-xorg-video-nv did not help.

Update #3: See this post for earlier problems and this post for a later round of nVidia issues (and how I resolved them).

Friday, August 12, 2011

Q&A Sites for Engineers

One of the authors at Online Engineering Degree asked me to mention an article there: 25 Q&A Sites for Engineers. It's a compendium of links to exactly what the title describes. I'm not sure anyone reading this blog will be interested (industrial engineering does not seem to get much play among the sites listed), but I'm happy to pass the link along.

Saturday, August 6, 2011

Tab Completion Bug in Mint 11

This is really a tab completion bug in Ubuntu 11.04 - and may actually be a pair of bugs (I'm not sure), one not the fault of Ubuntu. Details can be found in this bug report. The short form is that command line tab completion (where you start typing a path and hit TAB to complete it) started breaking down on one of my Mint machines. Hitting TAB would add an unwanted space at the end of the path (forcing me to backspace if I were not yet done) and also failed to escape spaces. In fact, if I typed the portion of the path containing the space and escaped it, then hit TAB, the escape character (backslash) would be removed.

The bug report contains two possible fixes. As it happens, I had started out with Acrobat Reader 9 installed from the repository on this machine, then replaced it with the version downloaded from Adobe's web site. This is my 64 bit box, and there's an issue with Acrobat Reader looking for 32 bit versions of libraries (which I have installed), finding the 64 bit versions instead, and tripping over them. I thought maybe using the Adobe version would fix the problem. (It did not.)

At any rate, I tried one of the two suggestions in the bug report: deleting /etc/bash_

Thursday, August 4, 2011

A GNOME Panel Hack

Linux Mint 11 (Katya), built on Ubuntu 11.04 (Natty Narwhal), continues to act goofy at times, notably with intermittent boot failures on both my home PC (AMD 64 bit quad-core) and my laptop (Intel 32 bit dual-core). On the desktop, when a boot fails I get a set of messages that stop abruptly; the failure point is a bit random (sometimes it stops after the battery check, sometimes it goes deeper). On the laptop, the screen stays blank; two of the LED indicators (either numeric lock and caps lock or caps lock and scroll lock; I forget which pair) blink in unison. On both machines, rebooting eventually succeeds.

Another intermittent problem has to do with the GNOME panel. Again on both machines, I sometimes get a message that one of the panel components failed to load (sometimes the indicator applet, sometimes the clock applet, this morning the Mint Menu button). I'm asked if I want to delete it, to which I always reply in the negative.

Killing the GNOME panel will cause it to spontaneously regenerate, and usually it regenerates correctly. (Occasionally it needs to be killed a second, or even third, time.) So I cobbled together a menu entry to do this. I put a file named Fix\ Panel.desktop into ~/.local/share/applications. The contents of the file are listed below. Now all I have to do is click the Menu button, find "Fix Panel" and click it to (hopefully) repair the panel.

#!/usr/bin/env xdg-open
[Desktop Entry]
Version=1.0
Type=Application
Terminal=false
Icon[en_US]=gnome-panel-launcher
Name[en_US]=Fix Panel
Exec=killall gnome-panel
Comment[en_US]=Restarts GNOME panel if it's glitched
Name=Fix Panel
Comment=Restarts GNOME panel if it's glitched
Icon=gnome-panel-launcher

Sunday, July 31, 2011

The Curse of Basic Numeracy (or Why I Keep Gaining Weight)

Like all O.R. people (I hope!), and perhaps 2% of political officeholders in the U.S. (fewer if you restrict the count to Congress), I am functionally numerate. In particular, when ordering food at restaurants or high-calorie beverages (like the one I'm slurping as I type this) at coffee shops, I recognize when multiple sizes of the same order are priced so as to give the consumer an economy of scale. For instance, the price of the frozen mocha in front of me comes from the following table:

| Size (oz.) | Price (USD) | Marginal Cost (USD/oz.) | Average Cost (USD/oz.) |
|---|---|---|---|
| 10 | 3.75 | 0.375 | 0.3750 |
| 16 | 4.35 | 0.100 | 0.2719 |
| 20 | 4.65 | 0.075 | 0.2325 |
| 24 | 5.05 | 0.100 | 0.2104 |
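
For anyone who wants to check the arithmetic, here is a minimal Python sketch that recomputes the two cost columns from the sizes and prices. It assumes, as the table appears to, that the marginal cost of the smallest size is measured against buying nothing (0 oz. at 0 USD); the function name is made up for illustration.

```python
def cost_table(sizes, prices):
    """Return rows of (size, price, marginal cost, average cost).

    Marginal cost is the extra price per extra ounce relative to the
    next smaller size; the smallest size is compared with 0 oz. at
    0 USD (an assumption that matches the table's first row).
    """
    rows = []
    prev_size, prev_price = 0, 0.0
    for size, price in zip(sizes, prices):
        marginal = (price - prev_price) / (size - prev_size)
        average = price / size
        rows.append((size, price, round(marginal, 3), round(average, 4)))
        prev_size, prev_price = size, price
    return rows


sizes = [10, 16, 20, 24]            # ounces
prices = [3.75, 4.35, 4.65, 5.05]   # USD

rows = cost_table(sizes, prices)
best_marginal = min(rows, key=lambda r: r[2])[0]  # size with lowest marginal cost
best_average = min(rows, key=lambda r: r[3])[0]   # size with lowest average cost

for size, price, marginal, average in rows:
    print(f"{size:>2} oz.  {price:.2f}  {marginal:.3f}  {average:.4f}")
```

The two `min` calls pick out the sizes with the best marginal and best average cost, respectively.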

There is a modest diseconomy of scale in marginal cost going from the 20 oz. size to the 24 oz. size, the reason for which eludes me. That in turn bothers me at one level (I teach in a business school, so I feel that I should understand pricing models) and not at another (I occasionally fly on commercial airlines, whose ticket pricing algorithms appear to be the first "practical" application of chaos theory).

Obviously, being an O.R. person (and a business prof at that), I was compelled to order either the 20 oz. size (best marginal cost) or the 24 oz. size (best average cost). Thus do I, being perfectly rational, give the locker room scale one more reason to shudder when I walk in the door. I did order a reduced-calorie version, which is somewhat like playing stickball on a busy one-way street as opposed to a busy two-way street.

Update: I'm now sitting in another coffee shop, where I buy beans. They have a pretty sweet deal: buy a pound of beans (slightly more expensive than bargain brands at the local supermarket, but not much more) and get a free drink, any type, any size. I'm not sure exactly how many ounces are in this drink, but the fact that it has its own lifeguard on duty is not a good sign. I truly do not want to think about how many calories it contains.

Tuesday, July 26, 2011

Princeton Consultants Is Hiring

This is a first for me. I received an email this morning from someone at Princeton Consultants, asking if it would be appropriate to list some job openings on the blog. Given the current unemployment rate in the U.S., who am I to say no -- especially since their name is "Princeton Consultants" and they are headquartered in Princeton, NJ (although as far as I know they are unaffiliated with Princeton University).

Quoting the message I got:

Our firm, Princeton Consultants, does IT and Management Consulting (optimization, systems integration, business process improvement, etc.) for a variety of Fortune 500 companies. We are currently looking for 10-15 new employees to start immediately as an Associate, Senior Associate or Analyst.

These are apparently all full-time positions. Interested readers can learn more about the company from their careers page, and can find a brief application form on their site. If you know any OR types in the job market, please pass the word to them.

Monday, July 25, 2011

USENET: A Remembrance

Although I've already submitted a post to the July INFORMS blog challenge ("O.R. and Social Networking"), I cannot let the topic go by without a (an?) homage to sci.op-research. It is probably premature (albeit barely) to write an epitaph for USENET: in fact, its traffic apparently continues to grow, and where once you needed access to a USENET server, now much of it is accessible via Google Groups.